The World's Most Dangerous AI Tool Has a Possible Breach on Its First Day. Here's What Everyone Is Missing.

Anthropic spent weeks vetting who was trustworthy enough to access Mythos. According to Bloomberg, a small group of unauthorized users reportedly accessed it on the same day the decision was made public.

(This article will be updated as Anthropic's investigation produces findings. Last updated: April 23, 2026.)

On March 26, 2026, a routine configuration error inside Anthropic's content management system exposed nearly 3,000 unpublished internal files to the public internet, including a draft blog post announcing what Anthropic described as "by far the most powerful AI model we've ever developed." The model's name: Claude Mythos. Twelve days later, Anthropic moved from accidental disclosure to deliberate launch.

On April 7, 2026, Anthropic launched Project Glasswing, a controlled release of Claude Mythos Preview to twelve named launch partners: Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. The model had already found thousands of high-severity vulnerabilities in every major operating system and web browser. Anthropic's position was direct: AI has reached a level of coding capability where it can surpass all but the most skilled humans at finding and exploiting software vulnerabilities.

Anthropic is now investigating a possible breach of Mythos. The company told CBS News it is "looking into a report of unauthorized access to Mythos from one of its third-party vendor environments." So far, Anthropic has not detected any breaches outside of its vendor environment or any compromises to its systems.

Anthropic confirmed its investigation on Wednesday, April 22, a day after Bloomberg reported that a small group of unauthorized users had gained access to the tool, citing a person familiar with the matter. Bloomberg's reporting, corroborated by TechCrunch and SiliconAngle, alleged the group accessed the model on the same day Glasswing was announced. The users reportedly gained entry through the credentials of a forum member who works for a third-party contractor that evaluates Anthropic models, and used details from a data breach at AI recruiting and training startup Mercor Inc. to locate the model.

- They did not use a zero-day exploit.

- They did not breach Anthropic's core infrastructure.

- They used a contractor credential, a URL pattern guess, and intelligence from a recruiting startup's prior breach.

Federal officials, security experts, and leaders at global institutions including the International Monetary Fund have raised concerns about what might happen if Mythos falls into the wrong hands. While Project Glasswing is intended to help companies insulate themselves from cybersecurity threats, some experts are concerned that Mythos could also be used to exploit IT infrastructure at banks, hospitals, government systems, and other organizations.

Alissa Valentina Knight, CEO of cybersecurity AI company Assail, put it plainly to CBS News: “We need to prepare ourselves, because we couldn’t keep up with the bad guys when it was humans hacking into our networks. We certainly can’t keep up now if they’re using AI because it’s so much devastatingly faster and more capable.”

She is right. And the breach data from Anthropic's own selected partner list explains exactly why.

What the breach data says

We built an incident database covering all 12 named Glasswing launch partners.

The result: 44 documented cybersecurity incidents across organizations collectively responsible for protecting the infrastructure you use every day.

Every single Glasswing partner has experienced material breaches. Not minor incidents. Nation-state intrusions, ransomware attacks, supply chain compromises, and data exposures affecting tens of millions of people.

In late November 2023, the Russian state-sponsored threat actor Midnight Blizzard used a password spray attack to compromise a legacy non-production test tenant account at Microsoft, gaining access to a very small percentage of corporate email accounts, including those of senior leadership team members and employees in cybersecurity and legal functions. And that, ladies and gentlemen, is the same class of credential weakness alleged in the Mythos incident.

In 2023 and 2024, Cisco suffered two separate incidents. Attackers exploited an unpatched IOS XE vulnerability to compromise devices at telecommunications companies across the U.S., and a state-sponsored group tracked as UAT4356 exploited vulnerabilities in Cisco's Adaptive Security Appliances to install backdoor implants. Together, the incidents compromised data related to approximately 40,000 Cisco devices.

In August 2025, Palo Alto Networks confirmed it was impacted by a supply chain attack targeting the Salesloft Drift application that gave hackers access to downstream customer Salesforce data. Zscaler, a rival cybersecurity firm, disclosed a similar breach stemming from the same attack, affecting hundreds of organizations simultaneously.

In November 2025, Google said hackers stole data from more than 200 companies following a breach of Gainsight, a customer support platform integrated with Salesforce. The hacking group Scattered LAPSUS$/ShinyHunters claimed responsibility.

And we can’t forget about CrowdStrike. On July 19, 2024, CrowdStrike distributed a faulty update to its Falcon Sensor software that caused roughly 8.5 million Windows systems to crash in what has been called the largest outage in the history of information technology. This one was personal: I was stranded in an airport for hours, watching everyone stare at monitors that displayed the blue screen of death. The outage disrupted airlines, airports, banks, hospitals, and emergency services worldwide.

No external attacker required. CrowdStrike took down 8.5 million systems by itself. That is the endpoint security company Anthropic selected to help defend critical infrastructure from Mythos in the wrong hands.

We’re not listing these incidents to embarrass anyone. We’re listing them because they are the factual foundation of a specific argument: the organizations Anthropic chose as the responsible stewards of the world's most dangerous AI model cannot consistently secure their own networks, their own devices, or their own third-party vendors.

The "trusted partner" model of AI safety governance has a 100% documented breach rate among the organizations selected to prove it works.

That is not a coincidence.

That is a finding.

The number you need to remember

Over the weeks before the April 7 announcement, Anthropic used Claude Mythos Preview to identify thousands of zero-day vulnerabilities in every major operating system and every major web browser, along with a range of other important pieces of software. The vulnerabilities publicly mentioned in the April 7 disclosure represent only about 1% of what was found; the rest are still in the patching process. That means roughly 99% of what Mythos identified remained unpatched when the possible breach occurred.

Mythos did exactly what it was designed to do. It produced a comprehensive map of the attack surface that billions of people depend on every day. The question Anthropic cannot yet answer publicly is whether the group that allegedly accessed the model also accessed that map.

Anthropic has stated it has not detected any breaches outside of its vendor environment or any compromises to its systems. The investigation is ongoing. But the patch timeline tells you how long exposure windows stay open regardless of any single incident. Broadcom confirmed that a critical heap-overflow vulnerability in VMware vCenter Server was patched in June 2024 and was still being actively exploited in January 2026. CISA added it to its Known Exploited Vulnerabilities list on January 23, 2026, requiring federal agencies to remediate within three weeks. That is roughly 19 months of known exposure after the fix existed.
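The exposure window is simple date arithmetic, and it is worth checking by hand. Below is a minimal sketch using only the dates quoted above. The article gives the patch date as "June 2024" with no day, so the calculation assumes month granularity; the function name is illustrative.

```python
from datetime import date, timedelta

# Dates taken from the paragraph above. Only "June 2024" is given for the
# patch, so the gap is counted in whole calendar months (an assumption).
PATCH_YEAR, PATCH_MONTH = 2024, 6
KEV_LISTED = date(2026, 1, 23)


def months_between(start_year, start_month, end_year, end_month):
    """Count calendar months from the start month to the end month."""
    return (end_year - start_year) * 12 + (end_month - start_month)


exposure_months = months_between(
    PATCH_YEAR, PATCH_MONTH, KEV_LISTED.year, KEV_LISTED.month
)
# A three-week remediation window counted from the KEV listing date.
remediation_deadline = KEV_LISTED + timedelta(weeks=3)

print(exposure_months)                   # 19
print(remediation_deadline.isoformat())  # 2026-02-13
```

Counting this way, the gap between a fix existing and the vulnerability landing on the exploited list is measured in years, not weeks.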

Mythos does not solve patch latency. It documents it at scale and distributes that documentation to whoever can reach the API endpoint.

The detail everyone is missing or ignoring

The mainstream coverage focuses on Anthropic, the alleged Discord group, and whether a "too dangerous to release" model can be safely contained. Those are legitimate questions. They are also the wrong frame.

The reported pivot point in this incident is not one of the twelve partners. It is Mercor. A company Anthropic did not select. A company that does not appear on any Glasswing partner list. A company that, until this week, most people in cybersecurity had never heard of.

According to Bloomberg's reporting, corroborated by SiliconAngle, the unauthorized users gained entry through the credentials of a forum member who works for a third-party contractor that evaluates Anthropic models. The group combined those credentials with details from a data breach at AI recruiting and training startup Mercor Inc. to locate the model.

Read that again carefully.

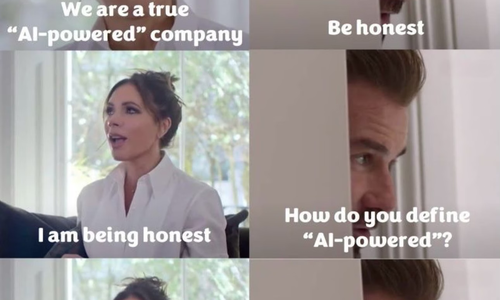

Anthropic spent weeks vetting twelve of the most credentialed technology organizations in the world. It imposed requirements, signed agreements, and constructed an access framework designed to keep Mythos out of the wrong hands. And the alleged access point was a recruiting startup sitting one tier below all of that, holding credentials to the system, with nobody watching.

Ram Varadarajan, CEO of cyber deception technology company Acalvio Technologies, stated: "The Mythos breach didn't require a sophisticated attack. It just required a contractor, a URL pattern and a Day-One guess, which means the 'controlled release' model failed at its weakest link before the model's capabilities were ever the issue. This is the supply chain problem that perimeter-centric security has always underestimated: access controls are a policy, not an architecture and policies fail."

We’ve audited hundreds of AI management systems, architected and deployed cloud infrastructure, and led globally dispersed development teams, and the pattern we see repeatedly is this: the organization tightens its own perimeter while the surrounding vendor tier remains open. The twelve vetted partners were not the problem. The problem was the contractor ecosystem nobody put on the list, holding credentials to the system the list was designed to protect.

While Anthropic controlled who received a Mythos invitation, it didn’t control what Mercor knew, or what a contractor's credentials were worth on a forum.

That is not a gap in one company's security posture.

That is a gap in the entire model.

Who is next

Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley are reportedly testing Mythos. Treasury Secretary Scott Bessent convened a meeting of senior American bankers in Washington in April to discuss the model.

Good. Financial institutions need better security tools. JPMorgan knows firsthand what nation-state-level breach exposure means: a 2014 cyberattack compromised data from 76 million households and 7 million small businesses and ran undetected for months before discovery. The intrusion started from an employee’s computer and a single server that lacked two-factor authentication. JPMorgan now reports facing 45 billion hacking attempts per day.

Here is the problem. These institutions are receiving access to the world's most capable AI vulnerability scanner while actively failing at the fundamentals.

Giving Mythos to institutions that are still losing to unpatched servers and contractor credential theft won’t make those institutions safer. It only gives them a more detailed map of their own exposure, along with a new and more attractive target for every threat actor who reads the same Bloomberg article you probably just read.

The question isn’t about systemic financial risk in the abstract. The question is more concrete: if these institutions can’t secure the third-party vendors sitting below their own vetted perimeters, and the Mythos incident is proof that nobody can, what exactly is the plan for protecting the vulnerability data Mythos generates about their systems?

Because that data, in the wrong hands, is not a cybersecurity tool. It is a targeting list.

What checkbox theater looks like at this scale

Anthropic is valued at approximately $800 billion. It committed up to $100 million in usage credits and $4 million in direct donations to open-source security organizations to launch Project Glasswing. It briefed senior officials across the U.S. government on Mythos Preview's full capabilities, including both its offensive and defensive cyber applications, through ongoing discussions with CISA and the Center for AI Standards and Innovation.

And Bloomberg is now reporting a possible breach through a recruiting startup's data and a URL pattern guess, on the day of launch.

I have a term for the gap between what an organization's security documentation claims and what its actual risk posture delivers: checkbox theater. The compliance artifacts are real. The certifications are valid. The policies are written, approved, and filed. And then Bloomberg receives screenshots and a live demonstration of alleged access to your most restricted model.

Project Glasswing may turn out to be the most expensive instance of checkbox theater in the history of artificial intelligence. Not because Anthropic's intentions were wrong. Because the governance model it deployed, identity-based access control, organizational vetting, and voluntary safety commitments, is structurally insufficient for a capability of this magnitude. The breach data from its own partner list makes that case before the Mythos investigation is even complete.

What you should demand

Voluntary AI safety commitments are not holding. This is not a fringe argument. It is the empirical conclusion of a dataset Anthropic assembled by choosing its partners.

Policymakers need to stop asking whether AI companies are being responsible stewards of powerful models and start asking what architecturally verifiable controls exist to enforce those commitments. "We vetted our partners" is not an architecture. "We restricted access to trusted organizations" is not a security model. As Acalvio's CEO stated directly: "access controls are a policy, not an architecture and policies fail."

AI training infrastructure, the contractors, evaluators, and data providers surrounding foundation model development, needs to be treated as critical infrastructure supply chain. The same vendor risk requirements applied to Glasswing partners must apply to every organization in the contractor tier beneath them. The Mercor situation, if confirmed, is not a one-time oversight. It is a category of risk present across every advanced AI model in development today.
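One way to make that requirement checkable rather than aspirational is to treat access as a graph and walk it. The sketch below is a hypothetical illustration, not a description of Anthropic's or any partner's tooling: the organization names, the `ACCESS_GRANTS` map, and the `VETTED` set are all placeholders. It assumes you can enumerate who has handed credentials or data access to whom.

```python
from collections import deque

# Hypothetical access graph: each key has granted credentials or data
# access to the organizations in its list. All names are placeholders.
ACCESS_GRANTS = {
    "model_provider": ["partner_a", "partner_b", "eval_contractor"],
    "partner_a": ["msp_vendor"],
    "eval_contractor": ["staffing_startup"],
}

# Organizations that went through formal vetting (assumed for illustration).
VETTED = {"model_provider", "partner_a", "partner_b"}


def reachable_orgs(graph, root):
    """Breadth-first walk returning every org with direct or inherited access."""
    seen = {root}
    queue = deque([root])
    while queue:
        org = queue.popleft()
        for downstream in graph.get(org, []):
            if downstream not in seen:
                seen.add(downstream)
                queue.append(downstream)
    return seen


def unvetted_access(graph, root, vetted):
    """Orgs that can reach the system but never appeared on the vetted list."""
    return sorted(reachable_orgs(graph, root) - vetted)


gaps = unvetted_access(ACCESS_GRANTS, "model_provider", VETTED)
print(gaps)  # ['eval_contractor', 'msp_vendor', 'staffing_startup']
```

In this toy graph, every organization flagged sits one or two tiers below the vetted list, which is exactly where the reported Mythos access path ran. The hard part in practice is building an accurate graph, not walking it.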

The XZ Utils backdoor is the historical warning sign the industry did not hear loudly enough. A suspected nation-state actor spent roughly two years cultivating a fake identity inside open-source infrastructure to insert a backdoor targeting SSH authentication. It was caught by accident. That same approach, patient identity-based infiltration of trusted adjacent infrastructure, is now the documented playbook. AI training contractors are the new high-value target. They are not being defended at that level.

Logan Graham, who leads offensive cyber research at Anthropic, said publicly that other AI companies, including OpenAI, are already working on models with capabilities similar to Mythos Preview, and that the window before such capabilities are broadly available could be as soon as six months or as far out as eighteen months. That is the real clock in this story. Not the hours it allegedly took to access Mythos. The six to eighteen months before every threat actor on the planet has equivalent capability, and the world is still patching at the pace of months.

I’ve argued for years that the credibility gap between what technology vendors claim and what their actual security posture delivers is not a flaw in the AI governance environment. It is the operating condition. The possible Mythos breach, and the 44 incidents documented across its 12 launch partners, is that argument with a case study attached.

The question now is whether policymakers treat this as a turning point or a footnote in the next voluntary guidance document.

Based on what we’ve seen across hundreds of audits and in the rooms where these decisions get made, I already know which one it will be without sustained public pressure.

That is why you are reading this.

