The Playbook Has Not Changed. Only the Zip Code Did.

Meta is logging your keystrokes. It is capturing your mouse movements. It is taking screenshots of your screen while you work. And the man now running that operation built his entire career on the same principle: human behavior is a resource to be extracted, and the less power the humans have, the cheaper the extraction.

His name is Alexandr Wang. He is Meta's Chief AI Officer. He is 29 years old. He is worth an estimated $3.2 billion. And he’s doing exactly what he has always done.

If that surprises you, you have not been paying attention or reading the right reports.

The Origin Story They Keep Wunderwashing

There is a word for what the tech press does to people like Alexandr Wang. I call it wunderwashing. Take one young prodigy, add immigrant parents, a famous institution, a dropout moment, and a record-breaking valuation. Voilà! Print the same story hundreds of times until the narrative calcifies into mythology. Then watch as that mythology does the quiet work of making accountability feel almost rude.

Wang's wunderwash is one of the most complete in Silicon Valley history.

He was born in Los Alamos, New Mexico. His parents were Chinese immigrants who worked as physicists at the Los Alamos National Laboratory, the site where the Manhattan Project was executed. He competed in national math and coding competitions in sixth grade. He graduated high school a year early. He enrolled at MIT to study mathematics and computer science, then dropped out at 19 to start Scale AI through Y Combinator in 2016.

By 2021, he was the world's youngest self-made billionaire at 24. Forbes 30 Under 30. Time 100 AI. Davos. Congressional briefings. The Fortune profile. The TED talk. Then the Fortune profile again.

Each retelling added another coat. Each coat made the next hard question easier to skip.

Here’s the question the wunderwash was designed to drown out. What did it cost to build?

Scale AI's business model wasn’t complicated. The mathematical models powering AI tools require vast amounts of accurately labeled data. Someone has to label it. Wang built the infrastructure to make that labor as cheap as possible, at the highest possible volume, in the jurisdictions where workers had the least ability to push back.

That is not an inference. It is the documented record.

What Scale AI Actually Built

In 2017, Scale AI established Remotasks, a crowdworking subsidiary that supplies labeled data for machine learning, with operations across Southeast Asia and Africa.

Here is what those operations looked like:

- In the Philippines, workers labeling data for Scale AI earned far below the country's minimum wage. Some annotation task payments dropped to less than one cent due to what Scale AI described as "vicious competition" after the platform expanded to India and Venezuela. Late payments were reportedly commonplace, and some workers received only a small fraction of their promised compensation.

- In Kenya, workers earned between $1.32 and $2 an hour compared to $20 an hour for their American counterparts performing identical work. The unemployment rate among young Kenyans runs as high as 67%, which meant workers had few alternatives.

- An Oxford Internet Institute study in 2022 found that Remotasks met the minimum standards of fair work in only 1 out of 10 criteria. Yes, 1 out of 10.

- Workers weren’t just underpaid. Many were required to review and label violent, graphic, and traumatic content with no mental health support. Kenyan workers described the experience as producing depression, anxiety, recurring intrusive thoughts, and what multiple workers called "modern slavery."

- In early 2024, nearly 100 Kenyan data laborers employed by companies including Scale AI wrote an open letter to President Biden. The letter stated their working conditions amounted to modern-day slavery. And what did Wang do? Well, Scale AI responded in 2024 by dismantling their union.

- In March 2024, Scale AI's Remotasks subsidiary abruptly shut down operations in Kenya, communicating the closure through a last-minute email sent hours before exit, leaving thousands of workers without income or recourse.

Philip Alchie Elemento, a former Scale AI tasker in the Philippines, described the dynamic directly: "Scale AI can exploit Filipino workers because they know we don't have a choice."

The Legal Record While the Deals Were Closing

This is not old history. The litigation was active while Meta was negotiating the acquisition.

In December 2024, Scale AI was sued by a former employee alleging wage theft and worker misclassification. In January 2025, a second former employee filed an identical suit. Also in January 2025, contractors sued Scale AI alleging psychological harm from being required to process disturbing content during model training.

That same month, the U.S. Department of Labor opened an investigation into Scale AI for compliance with the Fair Labor Standards Act, covering unpaid wages, misclassification of workers as contractors, and illegal retaliation. The investigation was ongoing as of the reporting date.

Wang is named as a defendant in the class-action case, accused of knowingly breaking labor laws, along with other Scale AI executives.

Three lawsuits.

A federal labor investigation. A documented pattern of exploitation across multiple countries and years.

Meta closed a $14.3 billion acquisition and handed Wang the title of Chief AI Officer.

That wasn’t an oversight. That was an intentional choice.

How He Bought His Protection First

The labor violations explain what happened to Scale AI's workers, but the political strategy explains why nothing happened to Scale AI.

Wang did not wait for scrutiny to arrive before building relationships in Washington. He constructed them proactively and with precision.

In the years before the Meta deal, Wang hosted a policy summit at the Montage Deer Valley in Utah, alongside longtime Scale AI investor Nat Friedman. Senior Pentagon officials attended. One attendee told Fortune the event appeared to be "a bit of a sales event to show off to investors and government customers." Another described it as a "fairly transparent effort to ingratiate himself with the national security establishment."

That summit was wunderwashing with a security clearance. The formula was identical to what the tech press had been running for years. The story shifted from youngest billionaire to national security visionary. The mechanism stayed the same but the mythology got a new cape.

Wang testified before the House Armed Services subcommittee in July 2023, speaking on AI challenges in national security. He briefed the Select Committee on the Chinese Communist Party twice. He cultivated relationships with members of Congress across both parties, with one lawmaker publicly calling him "a real friend."

He attended Donald Trump's inauguration in January 2025, alongside other tech executives.

His former managing director at Scale AI, Michael Kratsios, is Trump's nominee as director of the White House Office of Science and Technology Policy, the president's chief technology advisor.

The Department of Labor investigation into Scale AI was open while Kratsios was being nominated. The man potentially overseeing U.S. technology policy used to run the company being investigated.

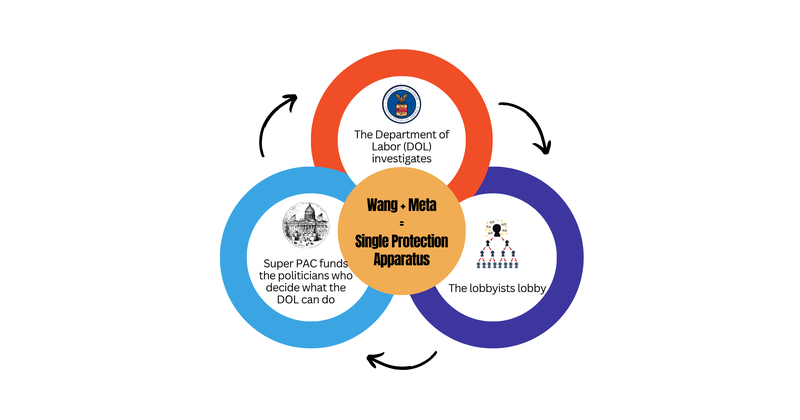

Then Wang walked into Meta, and the individual protection network met an institutional one.

Meta spent $26.3 million on federal lobbying in 2025, according to OpenSecrets. That’s not a typo. The company now employs roughly one lobbyist for every six members of Congress, according to Issue One. And of those lobbyists, 85% are revolving door hires who previously worked in the legislative or executive branches of government.

In September 2025, while Wang was settling into his role as Chief AI Officer, Meta launched a super PAC called the American Technology Excellence Project. The company pledged tens of millions of dollars to elect state candidates from both parties who support AI development and oppose AI regulation. More than 1,000 AI-related bills were introduced in all 50 states during the 2025 legislative session alone. Meta's PAC exists to bury them.

Wang framed his ambitions through his childhood geography: "Knowing about the history of where I grew up and the impact that Los Alamos and the Manhattan Project had on Pax Americana and the global order, it felt so clear that great AI technology needs to be applied to national security problems."

That framing worked.

- Scale AI secured $110 million in contracts with the U.S. Air Force and Army.

- In September 2025, the DoD awarded Scale AI a $99 million contract for Army research and development.

- The Pentagon's Chief Digital and Artificial Intelligence Office selected Scale AI to test and evaluate large language models for military planning.

- In March 2025, the Defense Innovation Unit awarded Scale AI a contract for Thunderforge, the Department of Defense's flagship program to integrate AI into military planning and operations, including planning movements of ships, planes, and other assets. The program also included Anduril and Microsoft.

- Scale AI and Meta previously developed a product called Defense Llama, a large language model with military applications.

What Wang built at Scale AI through personal relationships, Meta was already building through institutional capital. Together, the individual access and the corporate firepower operate as a single protection apparatus.

The Department of Labor (DOL) investigates.

The lobbyists lobby.

The super PAC funds the politicians who decide what the DOL can do.

The national security persona, built through summits and congressional briefings, was neither earned nor incidental to Scale AI's business. At Meta, it is now load-bearing infrastructure.

The Connection That Changes Everything

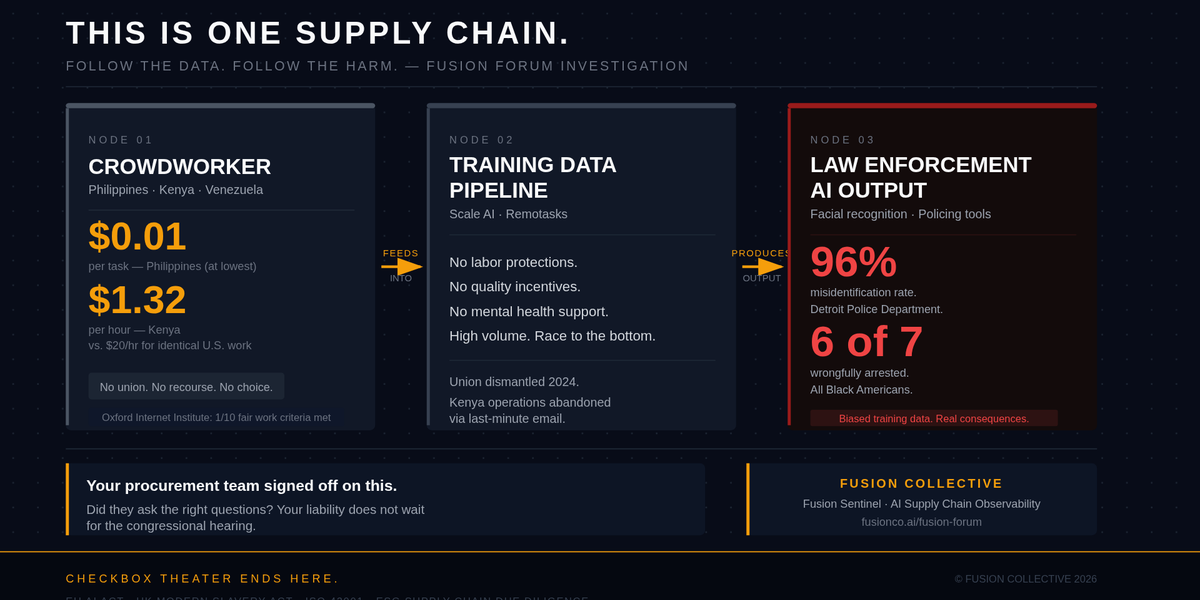

Most coverage of Scale AI focuses on labor exploitation in isolation. Most coverage of facial recognition bias focuses on the police departments using the tools. But these two stories haven’t been told as a single supply chain.

And they should be.

Scale AI is not a facial recognition company. That distinction has protected Wang's reputation for years. But the distinction is narrower than it appears.

Scale AI's core business is labeling training data. AI systems, including those deployed in law enforcement contexts, learn from that labeled data. The quality, the composition, and the demographic representation of that training data determine what the model gets right and what it gets catastrophically wrong.

Here is what the downstream record shows:

A 2019 study by the National Institute of Standards and Technology, which tested 189 facial recognition algorithms from 99 developers, found that Black and Asian people were between 10 and 100 times more likely to be misidentified than white men, linked directly to underrepresentation in training datasets.

At least seven Americans have been wrongfully arrested due to facial recognition misidentification. Six of the seven are Black.

Former Detroit Police Chief James Craig acknowledged that if officers were to use facial recognition by itself, it would yield misidentifications 96% of the time.

In March 2026, a Tennessee grandmother spent more than 5 months in jail after police used an AI facial recognition tool to link her to crimes committed in North Dakota, a state she says she had never visited.

The pattern is consistent. Biased training data produces biased outputs. Biased outputs go into law enforcement tools. Law enforcement tools arrest Black people who were nowhere near the crime.

The chain runs from a crowdworker in Mindanao earning less than one cent per task, through a data pipeline, into a police department's identification system. Every link in that chain is documented. Scale AI operated one of the most significant links. The workers producing the data had no job security, no labor protections, no quality incentives beyond raw task volume, and no ability to organize after the union was dismantled.

You can’t build reliable, unbiased AI training data on an exploited, unprotected, high-volume workforce racing to complete tasks at one cent each.

The business model and the output bias are not separate problems.

They’re the same problem.

Now He’s Running the Same Play at Meta

Wang joined Meta as Chief AI Officer in June 2025, leading the newly formed Meta Superintelligence Labs.

Meta simultaneously announced plans to cut approximately 8,000 employees, roughly 10 percent of its total workforce, with the first layoffs scheduled for May 2026. The company generated $201 billion in revenue in 2025. This is not a cost problem. It is a resource reallocation decision.

Meta is now logging the mouse movements, keystrokes, clicks, and screenshots of its U.S.-based employees to generate training data for AI models. Reuters reported that the program runs in the United States specifically because European privacy laws would not permit it.

The strategy is identical to what Wang executed at Scale AI. Find the jurisdiction where the workers have the least legal protection. Extract their behavioral data. Use it to train the models that will eventually replace them.

The workers changed. The logic did not.

Ed Zitron, a prominent technology critic, described Meta's internal environment as a "culture of paranoia." That framing is accurate but incomplete. What Meta employees are experiencing is not paranoia. Paranoia is an irrational fear of something that may not exist. The surveillance is real. The layoffs are confirmed. The person designing the strategy has a documented track record of treating workers as data sources until they are no longer needed.

What the Industry Keeps Getting Wrong

Most AI ethics commentary treats worker exploitation in the Global South and employee surveillance in Silicon Valley as separate issues requiring separate solutions.

This is for the folks all the way in the back. They are not separate. They are the same extraction model operating at two different price points.

Global South workers are paid $2 an hour to label data, review traumatic content, and annotate training sets with no benefits, no job security, and no legal protection. AI companies maintain distance from these conditions through a network of subcontractors and subsidiaries.

U.S. technology workers are having their professional behavior logged without meaningful consent, while their employer plans layoffs funded by the AI systems being trained on their own keystrokes.

They’re doing it at home now; they just gave it a different name.

What You Should Do With This Right Now

If you are a technology leader, a board member, a procurement officer, or anyone responsible for how your organization buys and deploys AI, you have a sourcing question in front of you today.

- Do you know how the training data in your AI systems was generated?

- Do you know what workers were paid to produce it?

- Do you know whether your vendors chose their labor markets specifically because the legal protections there were insufficient to enable workers to demand better conditions?

If you cannot answer those questions, you are not in compliance with your own stated values on responsible AI. Moreover, you may not be in compliance with the supply chain due diligence requirements that regulators in the EU and UK are formalizing right now.

- The EU AI Act carries supply chain implications.

- The UK Modern Slavery Act applies to global supply chains above a revenue threshold.

- Investors in ESG-focused funds are beginning to ask about AI labor provenance with the same rigor they apply to conflict minerals.

- ISO 42001, the international standard for AI management systems, directly addresses supply chain accountability.

The documented pattern at Scale AI (labor exploitation in low-regulation jurisdictions, worker misclassification, psychological harm without support, biased training outputs) is exactly the audit trail a competent AI governance engagement should surface before your organization deploys a system. Not after the congressional hearing.

Most organizations using AI systems touched by Scale AI's data infrastructure have no documented evidence that they asked these questions at procurement.

And that liability is in YOUR supply chain right now.

The Line Everyone Should See

There is a straight line from a worker in Mindanao earning less than one cent per task to a software engineer in Menlo Park whose keystrokes are being logged to train the model that replaces her, to a Black man in Michigan arrested in his driveway because an algorithm trained on compromised data “said” it was him.

Alexandr Wang didn’t invent this dynamic. But he read it accurately (or at least hung around Thiel and his acolytes long enough to guess), built a company around it, sold it for $14.3 billion, and then got hired to run the next version at a larger scale with more political protection than before.

The zip code changed but his playbook did not.

The question is not whether this will continue. It will, until the cost of continuing exceeds the cost of stopping. The real question always comes back to this: who bears that cost, and is your organization part of the system creating it or part of the one that ends it?

Checkbox theater will not get you there.
