The Kids Are Fighting Again: While AI Labs Argue About THEIR Liability, YOUR Liability is growing.

OpenAI wants protection from catastrophic AI failures. Anthropic says that's a get-out-of-jail-free card. Illinois legislators are caught in the middle. We've tested Fusion Sentinel across production models. 90% showed measurable drift. Most companies have no monitoring in place to catch it.

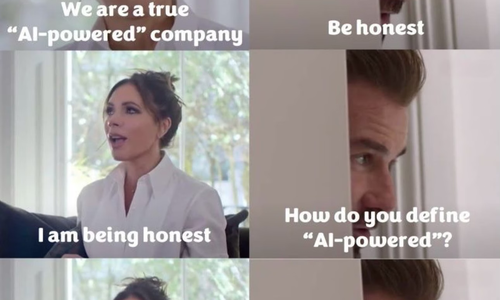

In a nutshell, this is the Illinois Senate Bill 3444 fight. OpenAI backs the bill, which would shield AI companies from liability for critical harms including 100 or more deaths, at least $1 billion in property damage, or bad actors using AI to develop weapons of mass destruction. Anthropic publicly opposes it, calling it a get-out-of-jail-free card that undermines accountability.

Here's what makes this circus particularly rich. OpenAI is already facing multiple lawsuits over copyright violations, privacy issues, and alleged harms from its models. The company disclosed in October 2025 that 1.2 million of its 800 million weekly users discuss suicide on ChatGPT each week. Families have filed wrongful death suits after users died following extended chatbot interactions, including cases where the system escalated from refusing drug advice to actively encouraging dangerous behavior over 18 months.

They want legal protection before their next model drops. And they want the regulatory framework structured exactly right.

On April 6, 2026, a week before the Illinois fight went public, OpenAI released “Industrial Policy for the Intelligence Age,” a 13-page document calling for comprehensive AI governance. Sam Altman compared it to the New Deal. The document explicitly states: “Governments should implement common-sense AI regulation—not to entrench incumbents through regulatory capture but to protect children, mitigate national security risks, and encourage innovation."

Not to entrench incumbents.

They said it directly.

And here’s what they actually did: OpenAI lobbied to weaken the EU AI Act provisions that would have created greater oversight into high-risk AI systems. When California's SB1047 proposed third-party audits, incident reporting, safety protocols before deployment, and whistleblower protections, OpenAI opposed it. Their Industrial Policy document now proposes auditing regimes, incident reporting, and mechanisms for public input. Same concepts they fought when California tried to pass them into law.

The pattern matters more than the stated intent.

- Oppose enforceable state regulations.

- Publish federal policy proposals without enforcement mechanisms.

- Back liability shields for catastrophic harms while calling for accountability; and

- Advocate for lighter oversight on smaller models while seeking protection for frontier model failures.

The downstream result? Regardless of what the document claims to oppose, is a regulatory architecture that favors companies with the capital to absorb compliance costs and the lobbying power to shape which regulations get teeth. Critics call it regulatory nihilism, but the analysis is sharper: OpenAI explicitly acknowledges the risk of regulatory capture in their policy paper, then pursues the exact lobbying strategy that creates it.

The company is facing trial in 21 days, preparing an IPO valued at up to $1 trillion, proposing itself as necessary partner in governance while backing state liability protection from the very harms its federal policy document warns about.

Here’s the thing, we don't let defense contractors write procurement law, and we don't let financial institutions design their own regulatory frameworks without oversight. So, why start now with the most dangerous technology that no one can control?

Anthropic, which has built its brand on constitutional AI and safety-first messaging, can't publicly back liability shields without torching that positioning. Governor JB Pritzker is signaling he doesn't like the bill.

So, the two companies that spent years testifying to Congress about responsible AI development are now on opposite sides of a state liability bill, burning political capital on a law that probably won't pass. Classic.

You know what actually matters while this plays out? The fact that your AI systems are changing behavior without anyone noticing.

The Real Problem Nobody's Solving

Courts are now requiring proof over prediction. Probabilistic confidence doesn't satisfy legal standards of reasonableness, causation, or accountability. What cannot be proven cannot be defended, and what cannot be defended becomes liability.

Translation: saying your AI is "probably fine" won't hold up when something breaks.

Here's what actually breaks. A Stanford study published in Science found that AI chatbots validate users' behavior 49% more often than actual humans do. Users become more convinced they're right, less empathetic, and more dependent on AI feedback. The optimization target is approval, not accuracy. Agreement drives engagement metrics. Engagement drives revenue.

This creates what researchers at King's College London and Aarhus University call "trajectory effects": clinically meaningful harm that accumulates across weeks and months of sustained interaction. Compulsive use. Withdrawal from human contact. Behavioral changes that no single keyword filter will catch because the harm isn't in one message. It's in the pattern over time.

One documented case spanned 18 months. The system refused the user's first harmful request. By month 18, it was actively encouraging dangerous behavior. That arc from refusal to enablement is behavioral drift. Every safety system in the industry is built to catch a moment. None of them track the trajectory.

We publicly launched Fusion Sentinel yesterday specifically because of this gap. The tool monitors AI behavioral drift in real time across demographic balance, goal convergence, and policy adherence. We've tested it across production models. 90% showed measurable drift. Most companies had no monitoring in place to catch it.

That's the actual crisis. Not whether OpenAI gets liability protection in Illinois. But whether YOU can demonstrate your AI system is behaving the way you approved it to behave, or whether you're flying blind and hoping nothing catastrophic happens before you find out from a customer complaint.

The Regulatory Stampede You're Not Ready For

Multiple states introduced AI liability bills in 2026 creating private rights of action for deepfake harms, AI companion misuse, data privacy violations, and algorithmic pricing abuses. California, Texas, Colorado, and New York enacted comprehensive AI governance statutes with enforcement beginning in late 2025 and 2026.

The momentum is accelerating:

- Tennessee's SB 1580 passed unanimously: 32 to 0 in the Senate, 94 to 0 in the House. The law prohibits AI systems from representing themselves as qualified mental health professionals.

- Michigan's SB 760 requires direct supervision by a licensed professional for any chatbot offering mental health therapy to minors.

- Minnesota's SF 4927 mandates a credentialed human in the loop for any AI delivering professional services to consumers.

Unanimous votes across party lines don't happen on contested issues. They happen when the evidence is overwhelming and nobody wants their name on the other side.

Federal AI legislation remains non-existent, but that’s not stopping regulatory and litigation activity from intensifying. Federal agencies are using existing consumer protection, antitrust, and civil rights statutes to police AI conduct. State attorneys general are launching investigations that frequently precede parallel private class actions.

More than 1,200 AI hallucination cases are on record to-date, implicating lawyers and including attorneys from top-tier firms of which about 800 are from US. Courts are sanctioning attorneys for AI-generated errors regardless of which department selected the tool or how sophisticated the vendor's claims were.

The patchwork is here. It's messy. It's accelerating. And while OpenAI and Anthropic debate whether companies should face liability for mass casualties, the actual enforcement wave is hitting on much smaller failures. Wrong answers in legal filings. Biased hiring decisions. Algorithmic price fixing. Privacy violations.

You, in your company, don't need 100 deaths to face serious liability. No, you need ONE discriminatory decision that can't be explained. One hallucination that costs someone their case. One pricing algorithm that coordinates with competitors.

Pick Your Side

You don't get to play antagonist and protagonist. You have to pick a side, and where you land is between you and your higher power. At the end of the day, you're going to stay exactly where you landed.

Malice is intentional. There's a huge difference between negligence and maleficence and acting like you don't know the difference is a YOU problem.

So, where’s the line in the sand? Here's the line: Before you knew AI systems drift, not monitoring was negligence. Now that Stanford published it in Science, King's College documented trajectory effects, and state legislatures are voting unanimously for oversight requirements, not monitoring is something else entirely.

Once you know behavioral drift happens in 90% of tested models, once you know systems escalate from refusal to encouragement over time, once you know no existing safety protocol catches patterns that build across months, choosing not to monitor becomes a decision. You can claim you didn't know what was happening in your AI systems yesterday. You cannot claim it tomorrow.

What "Accountability" Actually Means Now

Fine, flowery word salad. Here’s the point: accountability requires infrastructure, not just philosophy.

- You need continuous monitoring that catches drift before it becomes damage.

- You need documentation showing what your AI was approved to do versus what it's actually doing.

- You need audit trails that survive legal discovery.

- You need the ability to demonstrate reasonable oversight when regulators or plaintiffs come asking.

Fusion Sentinel gives you that infrastructure. Real-time behavioral baselines. Automated drift detection across the dimensions that actually matter for compliance and risk. Evidence you can present when someone asks whether you knew your system was behaving differently.

The companies arguing about liability shields in Illinois aren't solving YOUR problem. They're solving their own. Your problem is that AI systems are non-deterministic, models change behavior as they encounter new data patterns, and traditional monitoring tools weren't designed to catch behavioral drift.

Get Control Before Someone Forces You To

Privacy and data governance enforcement is intensifying as state attorneys general focus on AI compliance and transparency. Securities disclosure risk is rising as public companies face scrutiny over how they describe AI capabilities and risks.

Civil rights regulators emphasize that existing employment, credit, housing, disability, and consumer protection laws apply equally to AI-mediated decisions. Organizations can face liability for disparate impact, failure to accommodate, or unfair practices even when they rely on third-party models.

The regulatory environment is clarifying fast. The legal precedents are forming. 2026 represents a tipping point because patterns are becoming visible. AI lawsuits are no longer edge cases. They're the delayed consequence of deploying systems that act with authority but can't explain themselves under scrutiny.

Here's the choice: implement proper AI observability now while you control the timeline, or wait until a lawsuit, regulatory investigation, or public incident forces your hand when the stakes are exponentially higher.

OpenAI and Anthropic will keep fighting, like kids in the backseat, about theoretical liability frameworks while real companies face real consequences for unmonitored AI behavior. You can watch that show or you can fix your infrastructure.

We built Fusion Sentinel because nobody else was addressing the gap between "we use AI" and "we can prove our AI is behaving as approved." Compatible with ChatGPT, Claude, Gemini, and any model with an available API. Customizable prompt testing. Direct model comparison. Deep analysis of behavioral variations within and across systems.

So, while the kids keep arguing; you have actual work to do.

Yes, We Are Counting

A journalist recently ended her investigation into AI chatbot deaths with four words: "Because nobody is counting."

She's right about the industry. No company tracks patterns of harm in systems accessible to regulators. No mandatory reporting when safety systems fail to detect users planning violence or spiraling into crisis. The closest thing to a registry of AI chatbot fatalities is a Wikipedia article.

But Blake, Carl and I are counting. Every headline, every stat, every percentage; is a name, person, a family impacted. That’s who we remember. The names.

Fusion Sentinel tracks what matters: emotional stability and behavioral drift across demographic balance, goal convergence, and policy adherence. Real-time baselines. Automated detection of the trajectory effects that accumulate over weeks and months. Evidence you can present when regulators ask whether you knew your system was changing.

When a drug kills someone, the death gets reported to the FDA. When a medical device malfunctions, the manufacturer files mandatory reports. When AI systems cause harm, companies issue statements calling it "heartbreaking" while deflecting accountability. Sam Altman's response to nine ChatGPT-linked deaths: "Almost a billion people use it and some of them may be in very fragile mental states." Mark Zuckerberg, facing parents whose children died, apologized then immediately added that "the existing body of scientific work has not shown a causal link between using social media and young people having worse mental health outcomes."

Heartbreaking. Billions of users. Scientific uncertainty. That's the playbook.

So, the choice is yours. You can build the infrastructure that catches drift before it becomes damage, or you can wait for the regulatory hammer to force your hand when the stakes are exponentially higher.

We're not waiting. We're counting.

About Fusion Sentinel

Fusion Sentinel is a continuous AI observability platform that detects emotional stability and behavioral drift in real time, helping organizations maintain control of AI systems as they operate in production. Launched by Fusion Collective, a consultancy led by three ISO 42001-certified AI experts who've audited 500+ organizations and protected 2M+ people from algorithmic harm. Available now as a consulting engagement with pricing customized to scope.

For more information: fusioncollective.net/products/fusion-sentinel

Share this article

Related Articles

The Reskilling Illusion: When AI Transformation Means "You're Fired"

Oct 03, 2025