The AI Governance Guide Nobody Built for the $100M Company. Until Now.

Large enterprises have dedicated governance committees, chief AI officers, and million-dollar compliance budgets. Startups move fast and defer governance until they are bigger. Mid-market companies ($50 million to $500 million in revenue) sit in neither lane. They are deploying AI at enterprise scale with neither the governance infrastructure of a Fortune 500 company nor the speed-based justification of a pre-revenue startup. And the frameworks, consulting programs, and content designed to help them are built for organizations ten times their size.

The RSM 2025 Middle Market AI Survey studied 966 organizations across the US and Canada. Ninety-one percent are using generative AI. Ninety-two percent encountered implementation challenges. Seventy percent acknowledged needing external support to maximize AI's potential. Nobody built them a practical governance playbook. This is it.

Why Is Mid-Market AI Governance Different from Enterprise Governance?

The governance gap at the mid-market level is structural, not accidental.

A governance committee requires people to sit on it. A chief AI officer requires a salary that most mid-market operating budgets have not yet approved. A Big 4 consulting engagement to produce an enterprise AI governance framework typically starts at $500,000 before implementation. That price point ends most conversations before they begin.

The 2025 AI Governance Survey, conducted by Pacific AI across 351 participating organizations, found that familiarity with major governance standards, including the NIST AI Risk Management Framework, sits at 14% for small and mid-market companies, compared to significantly higher rates at large enterprises. Only 36% of small companies have dedicated governance officers, compared to 62-64% at larger firms. Only 41% provide annual AI training to staff.

None of the major AI regulatory frameworks, including the EU AI Act, the US Executive Order on Safe, Secure, and Trustworthy Artificial Intelligence (revoked in January 2025), and the proposed UK AI Bill, has carved out a mid-market exemption. Where obligations apply, they attach at the deployment level, not at the level of company size.

"91% of mid-market companies use generative AI. Only 36% of smaller companies have a dedicated governance officer. The gap between those two numbers is where liability lives." -- RSM 2025 / Pacific AI 2025

What Are the Four Governance Elements Every Mid-Market Company Needs First?

Governance doesn’t require an enterprise budget. It requires completeness. These four elements represent the minimum defensible position for a mid-market organization deploying AI at scale.

An AI inventory. You can’t govern what you can’t see. Start by mapping every AI system in use across your organization. For each system, record the vendor’s name, the data it processes, who authorized the deployment, and what the system is used to decide. Within 30 days of completion, this document typically changes the internal governance conversation, because organizations consistently discover deployments and uses they didn’t know existed.
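If the inventory lives in code or a spreadsheet export, a small record type makes the four required fields explicit and makes blanks easy to spot. The sketch below is a minimal Python illustration under our own assumptions: the `AISystemRecord` type, its field names (including the added `system_name`), the `missing_fields` helper, and the vendor names are all hypothetical, not a standard schema.

```python
from dataclasses import dataclass, fields


# Hypothetical layout for one inventory row. The last four fields mirror
# the items the inventory should capture for each AI system.
@dataclass
class AISystemRecord:
    system_name: str
    vendor: str
    data_processed: str   # e.g. "applicant resumes"
    authorized_by: str    # person who approved the deployment
    decision_use: str     # what the system is used to decide


def missing_fields(record: AISystemRecord) -> list[str]:
    """Return the names of fields left blank, so gaps are visible."""
    return [f.name for f in fields(record) if not getattr(record, f.name).strip()]


inventory = [
    AISystemRecord("Resume screener", "ExampleVendor", "applicant resumes",
                   "VP People", "which applicants advance to interview"),
    AISystemRecord("Support chatbot", "OtherVendor", "customer chat transcripts",
                   "", "routing and first-response answers"),
]

for record in inventory:
    gaps = missing_fields(record)
    if gaps:
        print(f"{record.system_name}: missing {', '.join(gaps)}")
```

Running it prints `Support chatbot: missing authorized_by`, the kind of gap (a deployment with no named approver) the inventory exercise is meant to surface.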

A risk classification. The EU AI Act sorts AI systems into four tiers: minimal risk, limited risk, high risk, and unacceptable risk. High-risk systems, including those used in employment decisions, credit scoring, healthcare, and educational assessment, must meet requirements for documented controls, human oversight mechanisms, and incident logging under the Act. Run your AI inventory through this classification. The exercise usually surfaces regulatory exposure the organization hadn’t identified.
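As a first pass over the inventory, a team might tag each use case with a provisional tier before legal review. The Python sketch below assumes a hand-maintained lookup table: the use-case labels, the `classify` function, and the `NEEDS_REVIEW` flag are hypothetical, and the entries outside the high-risk examples named above are simplified illustrations, not an authoritative reading of the Act.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"
    NEEDS_REVIEW = "needs review"  # not an EU AI Act tier; a triage flag


# Illustrative, incomplete mapping. The HIGH entries are the examples named
# in this article; the others are simplified examples for demonstration only.
TIER_BY_USE_CASE = {
    "employment decisions": RiskTier.HIGH,
    "credit scoring": RiskTier.HIGH,
    "healthcare": RiskTier.HIGH,
    "educational assessment": RiskTier.HIGH,
    "social scoring": RiskTier.UNACCEPTABLE,
    "customer chatbot": RiskTier.LIMITED,
    "spam filtering": RiskTier.MINIMAL,
}


def classify(use_case: str) -> RiskTier:
    # Unlisted use cases go to human/legal review instead of
    # silently defaulting to minimal risk.
    return TIER_BY_USE_CASE.get(use_case.strip().lower(), RiskTier.NEEDS_REVIEW)


for use_case in ["Employment decisions", "customer chatbot", "demand forecasting"]:
    print(f"{use_case}: {classify(use_case).value}")
```

The design choice worth keeping is the default: an unknown use case is flagged for review, so gaps in the table become work items rather than hidden exposure.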

A written incident response protocol. Not a policy statement. A protocol: who is notified within what timeframe, what data is preserved, which regulators require notification and by when, who communicates externally.

At AWS, the rule I lived by with every development team I led was simple: you can't scale what you can't replicate. Workflows nobody wrote down. Architecture nobody documented. Codebases only two people understand. That's not a product. That's a dependency.

Your incident response process works exactly the same way. If it lives in someone's head, it’s not a plan. It’s a single point of failure with a job title. The Pacific AI 2025 AI Governance Survey found that 46% of organizations with formal AI usage policies have no incident response playbook. If your organization has a policy, that is close to a coin flip on whether you are one resignation, one vacation, or one 2 a.m. breach notification away from discovering what an undocumented process actually costs. A policy is a statement. A playbook is a plan. Write the playbook and operationalize it. Tabletop it more than once a year, ideally more often than quarterly, so the response becomes reflexive rather than something someone has to find, remember, and then execute flawlessly under pressure.
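A playbook can start as a structured record of the protocol's questions: who is notified and by when, what data is preserved, which regulators must hear about it, and who speaks externally. The Python sketch below turns relative deadlines into clock times from the moment of detection. Every role, regulator, and deadline in it (and the `Playbook` and `NotificationStep` types) is a hypothetical placeholder; which regulators you must notify, and how fast, is a question for your counsel.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class NotificationStep:
    recipient: str      # role or body to notify
    within: timedelta   # deadline measured from detection


@dataclass
class Playbook:
    notify: list[NotificationStep]
    preserve: list[str]
    external_comms_owner: str


# Placeholder values only: replace with your own roles and obligations.
PLAYBOOK = Playbook(
    notify=[
        NotificationStep("General counsel", timedelta(hours=4)),
        NotificationStep("Incident owner (on-call)", timedelta(hours=1)),
        NotificationStep("Regulator A (placeholder)", timedelta(hours=72)),
    ],
    preserve=["model version and configuration", "input and output logs", "access logs"],
    external_comms_owner="Head of Communications",
)


def notification_deadlines(playbook: Playbook, detected_at: datetime) -> list[tuple[str, datetime]]:
    """Convert relative deadlines into wall-clock times, earliest first."""
    steps = sorted(playbook.notify, key=lambda step: step.within)
    return [(step.recipient, detected_at + step.within) for step in steps]


detected = datetime(2025, 3, 1, 2, 0)  # a 2 a.m. detection
for recipient, due in notification_deadlines(PLAYBOOK, detected):
    print(f"{due:%Y-%m-%d %H:%M}  {recipient}")
print("Preserve:", ", ".join(PLAYBOOK.preserve))
print("External communications:", PLAYBOOK.external_comms_owner)
```

A tabletop exercise can use the same record: pick a detection time, print the deadlines, and check whether each named person could actually meet theirs.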

A documented monitoring schedule. AI models drift over time: their performance changes as the data they encounter moves away from the data they were built and tested on. Monitoring is also a leadership responsibility, not only a technical one. The Deloitte 2026 State of AI report, which surveyed 3,235 leaders across 24 countries, found that organizations where senior leadership actively shapes AI governance achieve significantly greater business value than those that delegate governance entirely to technical teams. Document your monitoring reviews. Sign them. File them. That record is evidence of ongoing reasonable care.
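A monitoring record only works as evidence if it is dated and signed. The Python sketch below, built on hypothetical names (`MonitoringReview`, `overdue_systems`) and an assumed 90-day cadence, lists systems whose latest signed review is older than the interval or that have never been reviewed at all.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class MonitoringReview:
    system_name: str
    reviewed_on: date
    reviewer: str  # who signed off; an empty string means unsigned
    notes: str


# Assumed cadence for illustration only; set it per system and risk tier.
REVIEW_INTERVAL = timedelta(days=90)


def overdue_systems(reviews: list[MonitoringReview], systems: list[str], today: date) -> list[str]:
    """Return systems with no signed review, or whose latest signed review is older than REVIEW_INTERVAL."""
    latest: dict[str, date] = {}
    for review in reviews:
        if not review.reviewer.strip():
            continue  # this sketch does not count unsigned reviews
        previous = latest.get(review.system_name)
        if previous is None or review.reviewed_on > previous:
            latest[review.system_name] = review.reviewed_on
    return [s for s in systems if s not in latest or today - latest[s] > REVIEW_INTERVAL]


reviews = [
    MonitoringReview("Resume screener", date(2025, 1, 10), "Ops lead", "selection rates stable"),
    MonitoringReview("Support chatbot", date(2024, 9, 2), "Ops lead", "answer quality spot-checked"),
    MonitoringReview("Support chatbot", date(2025, 2, 20), "", "draft, never signed off"),
]
systems = ["Resume screener", "Support chatbot", "Forecasting model"]
print(overdue_systems(reviews, systems, date(2025, 3, 1)))
```

Here the chatbot is still flagged despite a recent draft review, because the draft was never signed, and the forecasting model is flagged because no review exists.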

Is ISO 42001 Certification Necessary for a Mid-Market Company?

The answer depends on one specific variable: who buys from you.

If your customers include enterprises, government agencies, healthcare systems, financial services firms, or educational institutions, the answer is yes. Enterprise procurement teams are increasingly adding AI governance documentation to their vendor qualification criteria. ISO/IEC 42001, the international standard for AI management systems published by the International Organization for Standardization and the International Electrotechnical Commission, is the benchmark; certification against it by an accredited certification body is independent proof that your governance practices have been audited. The Responsible AI Institute, which administers ISO 42001 certification programs, reports that certification requirements are appearing with increasing frequency in enterprise AI procurement criteria.

If your current customer base doesn’t require certification right now, the ISO 42001 framework is still the most rigorous publicly available structure for building governance that holds up under regulatory scrutiny. Building against that framework now costs a fraction of rebuilding after an incident.

What Is the Most Common Governance Mistake at the Mid-Market Level?

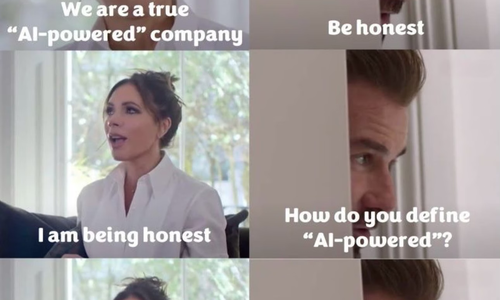

The most common governance failure at this scale is not a catastrophic decision. It’s a reasonable-sounding assumption that doesn’t hold up when tested.

The assumption is: 'We vetted this vendor. The contract looks standard. We are covered.'

What that assumption misses: AI vendors provide models and infrastructure. Deployment decisions, use case selection, data inputs, and output review belong to the deploying organization, and so does the liability for those decisions. Microsoft's Copilot Terms of Use and Atlassian's AI Terms both state this explicitly. Most enterprise AI agreements contain equivalent language.

The EEOC settled its first AI employment discrimination case in August 2023 against iTutorGroup, which had programmed its hiring software to automatically reject applicants above certain ages. The settlement was $365,000. The precedent was far more expensive: every employer using AI in hiring now operates under demonstrated EEOC enforcement authority. In May 2025, a federal court allowed Mobley v. Workday to proceed as a nationwide collective action under the Age Discrimination in Employment Act, building on an earlier ruling that an AI vendor can be held liable as an agent of the employers that deploy its tools.

"The EEOC settled its first AI hiring discrimination case in 2023. Mobley v. Workday advanced as a nationwide collective action in May 2025. Mid-market companies are not exempt from this precedent."

Where Does a Mid-Market Company Start?

The governance infrastructure that makes a mid-market company defensible doesn’t require an enterprise budget. It starts with three things:

- An honest inventory of every deployed AI system

- A structured risk assessment against the EU AI Act classification framework

- A documented incident response protocol that specifies what happens when something goes wrong

Fusion Collective does not start with a template and work backward. We start with you: where you actually are right now, not where a proposal assumed you would be six months ago.

That means governance programs sized for the actual structure, budget, and risk profile of where your company stands today. A startup. A $5 million company. A $50 million company. A $500 million company. The starting point does not matter. What matters is that the work is real, built from hundreds of actual AI system audits, and never cut from enterprise cloth that does not fit you.

We are a group of people who believe the hardest, most expensive path is often the right one and who will walk away from business before we agree to deliver something short of world-class. That is not a positioning statement. It’s how we decide what to take on and what to turn down.

ISO 42001 certification is available for organizations whose customer base or procurement pipeline requires it. For everyone else, we build governance that holds up when it matters, not governance that looks good in a 50-page deck ultimately used as a coaster.

Ninety-one percent of your peers are already using AI. The ones that win contracts, retain talent, and stay out of regulatory enforcement headlines are building governance infrastructure before they need it.

Start building your governance program at www.fusioncollective.net
