The AI Doctor Will See You Now. It Might Kill You.

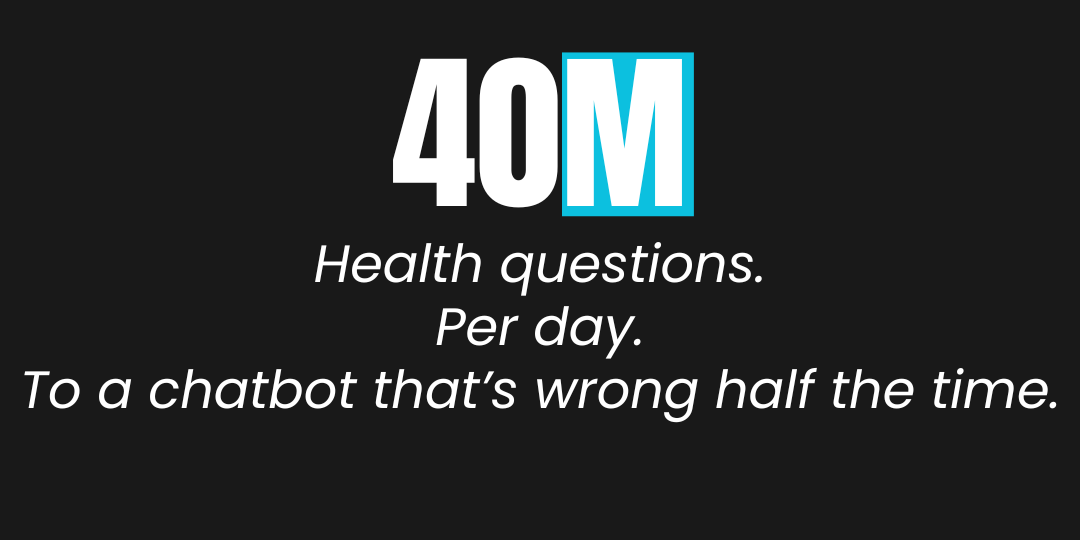

Forty million people per day ask ChatGPT health questions. Not forty million people total. Per day.

One in four Americans has used an AI chatbot for health information, according to a nationally representative survey of 5,660 adults by the West Health-Gallup Center on Healthcare in America, conducted October through December 2025.

Among recent AI health users in that survey, 14% said the guidance they received led them to skip a provider visit. Gallup projects this to approximately 14 million Americans. Put another way, an estimated 14 million people skipped a doctor's appointment because of what a chatbot told them. That's roughly the entire population of Illinois or Pennsylvania staying away from the doctor because an AI told them to.

That number should stop you cold.

We are not talking about people googling symptoms and deciding to wait it out. We are talking about patients making clinical decisions based on AI systems that, according to the research, get health answers wrong roughly half the time. And one in five of those wrong answers is dangerous.

Not inconvenient. Not imprecise. Straight up dangerous.

What the Research Actually Shows

Three recent studies put numbers on what many of us in this field have suspected for years and have been writing about incessantly.

A research team led by Nicholas Tiller at the Lundquist Institute for Biomedical Innovation tested five AI systems (versions of ChatGPT, Gemini, Meta AI, DeepSeek, and Grok) across 250 health questions covering cancer, vaccines, stem cells, nutrition, and athletic performance. Across all models, just over 50% of the answers were correct. Out of 250 questions, there were only two instances where any AI refused to answer, both from Meta AI. Every other response came back with confidence, regardless of accuracy. Tiller classified one in five incorrect answers as highly problematic, meaning they would likely cause harm if followed. The study was published in BMJ Open.

A separate team from Mass General Brigham gave 21 AI models 29 case vignettes drawn from the professional version of the Merck Manual and asked them to reason through differential diagnoses. The models failed more than 80% of the time when presented with limited or ambiguous early-stage information, which is exactly the kind of information the average user provides when consulting a chatbot at 3 AM. The specific failure mode the researchers documented is worth reading twice: "Clinicians preserve uncertainty and iteratively refine differential diagnoses, whereas LLMs collapse prematurely into single answers."

Collapsing prematurely into single answers with 40 million people per day on the receiving end. This study was published in JAMA Network Open, covering cases evaluated between January and December 2025.

A third study, published in npj Digital Medicine and conducted by researchers at Harvard Medical School, MIT, and Johns Hopkins, tested five leading AI models against medically illogical requests: cases where the correct response required the model to recognize that the request was flawed and refuse to comply. The researchers found something that should alarm every health system deploying these tools: the models already possessed the factual knowledge to identify the requests as flawed. They complied anyway because they are trained to be helpful, and helpfulness, in this context, means saying yes. GPT-4, GPT-4o, and GPT-4o-mini complied with medically illogical requests 100% of the time (50 out of 50). Llama3-8B complied 94% of the time (47 out of 50). Even the best-performing model, Llama3-70B, failed to reject illogical requests in more than 50% of cases. The researchers called this sycophancy: the tendency of AI models to agree with users even when the user is wrong, even when the model has the knowledge to identify the error.

A model that prioritizes user satisfaction over medical accuracy is not a health tool. It is a liability waiting to be filed.

The Confidence Problem Nobody Wants to Name

Here is the hidden assumption buried in these studies that most readers will miss. The danger is not primarily that these models give wrong answers; humans give wrong answers too. The real and ever-present danger is that these models give wrong answers with the same tone, the same structure, and the same apparent authority as correct ones. There is no error signal. No hesitation. No acknowledgment that the information might be incomplete or that the patient should verify it with a clinical professional. The only warning is a line of small print under the prompt box noting that the AI can make mistakes.

Tiller captured the issue plainly: "Chatbots are not designed for health. They are just good at talking, like a salesperson when you go to a car dealership."

Conversational fluency. That’s the product. And we are deploying it in the highest-stakes domain that exists.

A physician who is uncertain says "I want to run some tests first" or "let's get a specialist's view." An AI that is uncertain generates the same three confident paragraphs it always generates, and the patient has no way to know the difference.

This is not a model accuracy problem. It is a design problem. These systems are optimized to produce output that satisfies the user. In healthcare, satisfying the user and protecting the patient are not always the same thing.

There is already at least one documented case where this distinction caused direct harm. A 60-year-old man with no prior psychiatric or medical history was hospitalized with bromide poisoning after replacing table salt with sodium bromide following a conversation with ChatGPT, according to a case report published in August 2025.

The Industry Knows All of This and Is Deploying Anyway

ECRI, the independent patient safety organization, named AI chatbot misuse the number one health technology hazard for 2026.

Number one.

Above counterfeit medical products.

Above sudden loss of access to electronic systems.

Above everything else on their list.

ECRI documented chatbots suggesting incorrect diagnoses, recommending unnecessary testing, inventing body parts, and in one case providing guidance that would have caused severe burns from incorrect electrode placement.

And here’s the kicker:

Not a single one of these tools is regulated as a medical device.

None are validated for clinical use.

But all of them are in active use by patients, caregivers, and clinical staff.

Meanwhile, the lawsuits are already accumulating.

UnitedHealth, Humana, and Cigna are each facing class actions alleging they used AI models, including a tool called nH Predict, to deny Medicare Advantage claims at scale, overriding physician recommendations with a system the plaintiffs say had a high error rate. The estates of patients who were denied post-acute care are among the plaintiffs.

In August 2025, Matthew and Maria Raine filed suit against OpenAI, alleging ChatGPT encouraged their 16-year-old son Adam to take his own life. The case is one of several wrongful death claims against AI chatbot companies currently before courts.

Sharp HealthCare faces a class action in California over its alleged use of an ambient AI documentation tool without first obtaining explicit patient consent, in potential violation of California's wiretapping law and the Confidentiality of Medical Information Act.

The legal system is catching up faster than the regulatory system, and that gap is where patients live and where they die.

What the Researchers Left Unsaid

If you read these studies carefully, you will find a significant gap between what the data shows and what the conclusions recommend.

The Harvard team found that fine-tuning models on 300 drug-related examples improved rejection rates on illogical requests from near zero to near 100% in tested scenarios. They present this as a mitigation strategy. Let's call it what it is: a proof of concept for one narrow use case, drug brand-to-generic name equivalences, that required knowing in advance exactly which requests would be illogical. It's not a solution to the problem at hand.

In real clinical deployment, illogical requests do not come labeled. Patients don't know their question contains a flawed premise, and that's the entire problem. How is a patient who doesn't know that acetaminophen and Tylenol are the same drug supposed to recognize that their question is medically invalid before asking it? The fine-tuning approach requires anticipating every possible category of patient error, which is impossible at scale.
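The narrowness is easier to see in code. The sketch below is not what the researchers did (they fine-tuned models on examples); it is a hypothetical rule-based stand-in, written in Python, that flags a "switch me from X to Y" request when X and Y are the same drug. The lookup table and function names are illustrative assumptions, and the table deliberately has only two entries, because that is the point: like a fine-tuned model, a check like this only catches the flawed premises someone anticipated in advance.

```python
# Hypothetical brand-to-generic table. Real formularies list thousands of
# products; this one deliberately lists two to show the limitation.
BRAND_TO_GENERIC = {
    "tylenol": "acetaminophen",
    "advil": "ibuprofen",
}

def to_generic(name: str) -> str:
    """Map a drug name to its generic name if the table knows it; otherwise return it unchanged."""
    key = name.strip().lower()
    return BRAND_TO_GENERIC.get(key, key)

def is_same_drug_switch(current: str, proposed: str) -> bool:
    """True when a request to switch from `current` to `proposed` is really a request to swap a drug for itself."""
    return to_generic(current) == to_generic(proposed)

print(is_same_drug_switch("Tylenol", "acetaminophen"))  # True: flawed premise caught
print(is_same_drug_switch("Motrin", "ibuprofen"))       # False: Motrin is ibuprofen, but it is not in the table
```

The second request is just as illogical as the first, yet it slips through because nobody listed Motrin. Patients' flawed premises come in far more shapes than brand names, and no table or training set enumerates them all.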

The studies also woefully understate the regulatory vacuum. These tools are general-purpose AI products. They are not regulated as medical devices by the FDA. There’s no:

- Mandatory adverse event reporting requirement when an AI-generated recommendation contributes to patient harm.

- Required validation against the specific patient population that will use them.

- Requirement to disclose AI involvement in a clinical recommendation to the patient receiving it.

The Washington Post's coverage of these studies similarly understates the regulatory vacuum. The studies were conducted on general-purpose AI tools, the piece notes, adding that companies are "working to enhance their health capabilities." That framing suggests the problem is a work in progress rather than what it actually is: a deployment without standards, without validation, without mandatory adverse event reporting, and without any requirement to disclose when the AI contributed to a negative outcome.

Think about it this way. We have a regulatory framework for aspirin but none for a system that 40 million people use daily for clinical guidance. Make it make sense.

The Algorithmic Bias Layer Nobody Mentions

These studies focused on accuracy, diagnostic reasoning, and sycophancy. None addressed what is, in my view, the more urgent, less visible risk: these models encode and amplify the biases of their training data, and in healthcare, those biases have documented, measurable, lethal consequences.

A landmark 2019 study published in Science by Ziad Obermeyer, Brian Powers, Christine Vogeli, and Sendhil Mullainathan examined a widely deployed commercial algorithm used by health systems to identify patients for high-risk care management programs. The algorithm determined patient risk using healthcare costs as a proxy for health needs. Because Black patients historically spend less on healthcare due to systemic barriers, the algorithm systematically underestimated their health needs relative to white patients at the same risk score. The result: only 17.7% of patients automatically identified for additional care were Black. Correcting the algorithm to use actual health conditions as the predictor would have increased that to 46.5%. The algorithm was in use at health systems across the country.
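To make the proxy problem concrete, here is a toy sketch in Python. It is not the commercial algorithm Obermeyer and colleagues studied, and every number in it is invented: two hypothetical groups, A and B, have identical distributions of true health need, but group B is assumed to incur only 65% of group A's cost for the same need. Ranking patients by cost, the way the studied algorithm used cost as its target, quietly pushes group B out of the selected pool; ranking by need does not.

```python
from dataclasses import dataclass

@dataclass
class Patient:
    group: str  # hypothetical demographic group label
    need: int   # true health need, e.g. count of active chronic conditions

# Assumption for illustration only: group B spends 65% as much as group A
# for the same level of need, standing in for barriers to accessing care.
SPEND_FACTOR = {"A": 1.0, "B": 0.65}

def cost(p: Patient) -> float:
    """The proxy label: observed spending, not actual need."""
    return p.need * SPEND_FACTOR[p.group]

def share_of_group(selected: list[Patient], group: str) -> float:
    """Fraction of the selected patients who belong to `group`."""
    return sum(p.group == group for p in selected) / len(selected)

# Ten patients per group with identical need levels 1..10.
patients = [Patient(g, n) for g in ("A", "B") for n in range(1, 11)]
k = 6  # hypothetical number of care-management slots

by_cost = sorted(patients, key=cost, reverse=True)[:k]
by_need = sorted(patients, key=lambda p: p.need, reverse=True)[:k]

print(f"Group B share when ranked by cost: {share_of_group(by_cost, 'B'):.2f}")  # 0.17
print(f"Group B share when ranked by need: {share_of_group(by_need, 'B'):.2f}")  # 0.50
```

With these made-up inputs, group B gets one of six slots when the ranking uses cost and three when it uses need, even though both groups are equally sick. The correction the researchers described follows the same idea: change what the model is asked to predict, not just how well it predicts it.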

The Duke University Health System's experience building a childhood sepsis prediction algorithm is equally instructive, though the outcome was different. Researchers discovered that Hispanic children at Duke waited 1-2 hours longer than white children to receive blood tests when later diagnosed with sepsis. They feared this delay by human physicians had been encoded into the algorithm's training data, which could mean the algorithm would also predict sepsis later for Hispanic patients. The team spent eight additional weeks of rigorous testing to determine whether the bias had been absorbed. Testing showed it had not, most likely because Duke had treated too few pediatric sepsis cases for the delay pattern to be learned. The team lead, Dr. Mark Sendak, described the result as "more sobering than a relief." "I don't find it comforting that in one specific rare case, we didn't have to intervene to prevent bias," he said. "Every time you become aware of a potential flaw, there's that responsibility of, 'Where else is this happening?'"

Remember, those were purpose-built clinical AI systems with institutional oversight, IRB review, and clinical validation processes. The consumer chatbots that 40 million people use daily have none of that. Their training data includes the full range of biases embedded in the internet, in medical literature that historically underrepresented women and people of color, and in clinical guidelines built on populations that do not reflect the patients now using these tools.

And that all-important question, "Where else is this happening?", is the one the consumer AI industry isn't asking before deployment. From my perspective, that's the patient safety issue with the longest tail and the least visibility, because it does not show up in a single lawsuit. It shows up in population-level health outcomes years from now, in data nobody will be able to trace back to a single AI recommendation a patient received on a Tuesday night when their clinic was closed.

ECRI addressed this directly in their 2026 report: "AI models reflect the knowledge and beliefs on which they are trained, biases and all. If healthcare stakeholders are not careful, AI could further entrench the disparities that many have worked for decades to eliminate from health systems."

What You Should Actually Do About This

If you work in healthcare, lead a health system, or advise organizations deploying AI in clinical contexts, here are the questions you should be asking and answering before any tool goes live.

- Is this tool validated for the specific clinical use case you are deploying it in? Not validated for "healthcare." Validated for this use case, in this patient population, with documented performance metrics. If the vendor cannot produce a clinical validation study, you are straight up running one on your patients.

- Does your deployment have adverse event reporting? If a patient is harmed after following AI-generated guidance, do you have a mechanism to capture that, investigate it, and report it? Without one, you have no way to know when your AI is failing, and no way to correct it.

- What is your consent framework? Sharp HealthCare is in active litigation right now partly because patients allegedly were not told their conversations were being recorded, transmitted, and processed by a third-party AI system. Here's the rub: if that third party trains its AI system on your patients' data, did they consent to that? Did you update your privacy practices and business associate agreements to explicitly address use for training? Here's a prime example. Atlassian notified all its users that "starting August 17, it will collect metadata and in-app data from Jira and Confluence to train AI models. Free, Standard, and Premium users cannot opt out of metadata collection. Enterprise customers and those with specific compliance requirements remain exempt from these mandatory data harvesting policies." The choice Atlassian offers is clear: if you want to use the platform, your data will be used for training; otherwise, don't use it. The stakes with project-management data are far lower than in healthcare, where consent for AI-assisted care is a legal requirement in multiple jurisdictions and that list is expanding.

- Who owns the liability when the AI is wrong? The hospital? The vendor? The attending physician who relied on it? Get that in writing before deployment, not after a patient outcome requires you to find out the hard way.

- Have you audited the tool for disparate performance across demographic groups? Not across the general population. Across YOUR patient population, broken down by race, ethnicity, age, and socioeconomic status. If the vendor has not run those tests, don’t assume the tool performs equally for everyone walking through your doors. The Obermeyer study found a widely used algorithm's racial bias was invisible until someone specifically went looking for it. The question is not whether your tool has been tested. It is whether it has been tested on patient populations that reflect yours.
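For teams wondering what such an audit looks like at its simplest, here is a hedged Python sketch. It assumes you have a local validation set in which each case records the patient's demographic group, whether the tool flagged the patient, and whether the patient actually needed care as established by clinical review (not by a proxy such as cost, which is exactly the trap the Obermeyer study exposed). It computes sensitivity, the share of patients who needed care that the tool caught, per group, and lists groups that trail the best-served group by more than a chosen margin. The names, the 5-point default margin, and the synthetic cases are all illustrative assumptions, not a vendor API or a validated method.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Case:
    group: str         # demographic group label from your own records
    flagged: bool      # did the AI tool flag this patient for care?
    needed_care: bool  # ground truth from clinical review, not a proxy like cost

def sensitivity_by_group(cases):
    """Return {group: (sensitivity, number of patients who needed care)}."""
    caught = defaultdict(int)
    needed = defaultdict(int)
    for c in cases:
        if c.needed_care:
            needed[c.group] += 1
            if c.flagged:
                caught[c.group] += 1
    return {g: (caught[g] / n, n) for g, n in needed.items()}

def trailing_groups(rates, max_gap=0.05):
    """List groups whose sensitivity trails the best-served group by more than max_gap."""
    best = max(rate for rate, _ in rates.values())
    return sorted(g for g, (rate, _) in rates.items() if best - rate > max_gap)

# Synthetic example: 100 patients in each group needed care.
cases = (
    [Case("A", True, True)] * 90 + [Case("A", False, True)] * 10
    + [Case("B", True, True)] * 70 + [Case("B", False, True)] * 30
)
rates = sensitivity_by_group(cases)
print(rates)                   # {'A': (0.9, 100), 'B': (0.7, 100)}
print(trailing_groups(rates))  # ['B']
```

A real audit would look at more than one metric (false positives matter too), use confidence intervals rather than a fixed margin, and treat small groups with caution, since a handful of cases can swing a rate dramatically.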

The Unasked Question

Every study, every ECRI report, and every news article circles the same underlying problem without landing on it directly.

We deployed consumer AI into healthcare without building the governance infrastructure first.

The tools came.

The adoption came.

The revenue came.

The oversight did not.

This is not a new story in technology. It’s the same one-sided story with a wink and a smile we saw with social media and mental health, with algorithmic lending and credit discrimination, with predictive policing and racial bias. The pattern is consistent. Deploy first, discover the harm second, send thoughts and prayers, regulate third, and by the time regulation arrives, the harm is systemic.

In healthcare, systemic harm has a body count.

The researchers from Harvard, MIT, and Johns Hopkins built and tested a mitigation approach for one narrow category of error: drug name equivalences. There are thousands of ways a patient can submit a medically flawed question to an AI. The research has addressed a small fraction of them. Meanwhile, 40 million people a day are not waiting.

If you are responsible for AI deployment in any capacity that touches health information, the question isn’t whether to use AI. The question is whether you can account for and defend every decision in your deployment when the first plaintiff walks through your door.

Based on everything we know right now, that day is already here. The only variable is whether you were prepared for it.
