Cloudflare Wants to Secure Your AI Future. Read Their Incident Reports First.

Cloudflare's Chief Security Officer, Grant Bourzikas, recently published a blog post called "Preparing for the Future of AI in Cybersecurity." It is polished. It is confident. It tells you to think 5, 10, 20 years ahead. It tells you to consolidate vendors. It ends with a link to download a guide and a pitch to build AI applications on Cloudflare's global network.

It does not mention that Cloudflare caused five major self-inflicted outages in under a year.

This is not a story about one company. This is a story about an entire industry where the vendors writing your security playbook have a financial interest in what it says. And most security leaders are reading the thought leadership without reading the incident reports.

That needs to stop.

The Blog Says One Thing. The Record Says Another.

On November 18, 2025, a database permissions change at Cloudflare doubled the size of a configuration file used by their Bot Management system. That file propagated to every server on their global network. The servers crashed. For nearly six hours, roughly 20% of the web went dark.

X.

ChatGPT.

Spotify.

Shopify.

Canva.

Even Downdetector, the site people use to check if things are down, was down.

The root cause was not a cyberattack. It was a database change nobody validated before it hit production.

Seventeen days later, on December 5, a WAF configuration change propagated through the same system they had just promised to fix. Another outage. Their own post-mortem admitted the corrective measures from November had not been completed.

In July 2025, their DNS resolver 1.1.1.1 went offline globally for over an hour due to a legacy BGP routing misconfiguration.

In September, a React bug in their dashboard caused an infinite loop that effectively DDoS'd their own APIs.

In March, an engineer rotated R2 storage credentials and deployed the new keys to a development environment instead of production. 100% of write operations failed.

And in August 2025, third-party vendor Salesloft's Drift integration was compromised, exposing Cloudflare customer data through a supply chain attack. Cloudflare was one of 700+ organizations hit through the same OAuth token compromise.

Five outages.

All internal process failures. Zero caused by the sophisticated AI-driven threats the blog post warns about.

Now re-read Bourzikas’ blog. He writes about modernizing security. About autonomous SOCs. About AI-driven malware that will learn from defensive methods and modify its attack. The future is scary and complex and you need help navigating it.

Cloudflare would like to be that help.

The Credibility Test Your Vendor Is Hoping You Skip

Every security vendor publishes thought leadership. White papers. Blog posts. Conference keynotes. Guides with titles like "Ensuring Safe AI Practices." This content is positioned as industry guidance. It functions as a sales funnel. And the gap between those two things is where the real risk lives for security leaders.

Here is the test most buyers never run.

When a vendor tells you what the future of security looks like, compare it to what their last 12 months actually looked like. Pull their incident reports. Read their post-mortems. Look at what broke, why it broke, and whether the corrective actions were completed before the next failure.

Cloudflare's blog tells you to consolidate vendors and work with "four or five key partners." Their incident history shows what happens when one of those partners has a bad Tuesday. Security journalist Brian Krebs reported that some organizations pivoting away from Cloudflare during the November outage exposed themselves to malicious traffic because they had no independent defenses. They had consolidated. And that consolidation became concentration risk.

Let me be clear about what "consolidate vendors" means when Cloudflare says it. It means use Cloudflare. They want to be your one-stop shop. CDN, DNS, WAF, bot management, zero trust, AI deployment. The recommendation is not born from concern for your security posture. It is born from a revenue model that benefits when you put everything under one roof. Their roof. To be fair, Bourzikas isn’t wrong about the complexities that come with managing 50 vendors. But the solution he’s selling? It has nothing to do with simplification; it’s straight-up dependency.

Cloudflare's blog tells you to "implement the right technologies and tools" with "built-in AI and ML capabilities." Their own built-in ML capability, Bot Management, is the system that crashed their entire network when a feature file doubled in size.

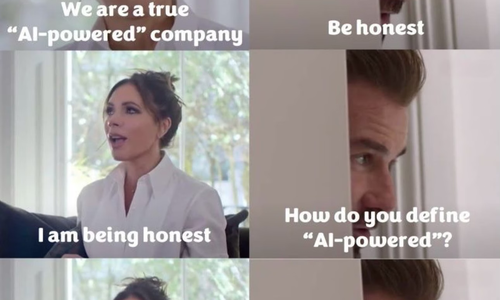

Cloudflare's blog tells you that "true AI inference is not yet available" and that "models cannot yet draw conclusions or generate meaningful insights from live data." Their own products depend on ML inference. Their bot scoring system runs inference on every request traversing their network. Telling your audience the technology is not ready while your revenue depends on it is not a timing disagreement. That sounds more like a credibility problem.

The baseball analogy in the blog is the most revealing moment. Bourzikas describes a model that found "at-bats" as the top predictor of Hall of Fame induction and warns readers not to take model output at face value without understanding the meaning. That’s solid advice. Now apply it to vendor content. The output says "prepare for the AI future with Cloudflare." The meaning says "we would like you to buy our products."

What Vendor Thought Leadership Is Actually Optimized For

This is not a Cloudflare-specific problem. It is an industry-wide pattern. The companies most aggressively publishing AI security guidance are the same companies selling AI security products. The incentive structure guarantees a specific conclusion: the future is complicated, the threats are advanced, and you need what we are selling.

Here is the part nobody wants to say out loud.

There is no industry consensus on what a good security posture looks like in the world of AI-driven malware, autonomous attack chains, and adversarial model manipulation.

None, nada, bupkis.

In traditional infosec, we have a common understanding of what "good" looks like. There are established frameworks, proven architectures, and reputable vendors (Cisco, Fortinet, Palo Alto, Arista) who built credibility over decades, regardless of how shaky some of that credibility has become recently.

We know the fundamentals. We can measure against them.

Here’s the thing: AI security has none of that yet. No shared benchmarks. No agreed-upon baselines. No mature frameworks for evaluating whether a vendor's AI security product actually works or simply exists. We are in the Wild West part of this story, where every vendor is vying to be top dog (inducted into the Hall of Fame) and will say anything to anyone if it grows their market share while the market is still taking shape. The play is simple: get while the getting is good and worry about tomorrow, well, tomorrow. YOLO!

And that’s the context in which Cloudflare publishes a blog telling you to consolidate around them and plan for the AI future on their platform.

What gets left out is equally predictable.

No vendor white paper will tell you that their own change management process failed repeatedly. No vendor keynote will mention that their tool's blast radius wiped out services for millions of users. No vendor guide will recommend that you build independent defenses against the possibility that they, the vendor, will be the cause of your next outage.

That silence is strategic. And if you are a security leader building your strategy from vendor content without cross-referencing it against vendor performance, you are making decisions with half the picture.

Verizon's 2025 Data Breach Investigations Report found that roughly 60% of breaches involved the human element, a category that includes human error. Every Cloudflare outage in 2025 was human error. Their blog post mentions it zero times.

Cloudflare tracked 230 billion threats per day in 2025 and blocked 47.1 million DDoS attacks. Impressive numbers. They also deployed credentials to a dev environment instead of production, failed to complete corrective actions between outages, and got breached through their own vendor supply chain. Both things are true. Only one of them makes it into the blog post.

How to Read Vendor Content Like an Auditor

Blake, Carl, and I have audited more than 500 organizations and AI systems. The pattern I see most often is not a lack of tools (they have a ton of them). It is misplaced trust in the people selling the tools. So, here’s how to read vendor thought leadership without getting sold:

- Pull the incident history. Every major cloud and security vendor publishes post-mortems. Read them. Look for patterns. Cloudflare's pattern is clear: internal configuration changes propagating globally without validation. If a vendor's post-mortems keep citing the same root cause category, their corrective actions are not working. And that tells you more about their operational maturity than any blog post or white paper will.

- Compare the claims to the product. If a vendor says AI inference "is not yet available" while selling products built on AI inference, you are reading marketing copy, not analysis. Check what the product actually does against what the thought leadership claims the industry can do. Gaps between those two statements reveal where the vendor is managing your expectations to serve their roadmap.

- Stress-test the consolidation pitch. When a vendor recommends you consolidate around fewer partners, ask what happens when they go down. Ask them directly and in writing. Ask for their uptime numbers, their mean time to recovery, and their blast radius containment strategy. If the November 18 outage had hit your production environment, what would your recovery plan have been? If they can’t answer that clearly, consolidation is concentration risk wearing a better but still ill-fitting suit.

- Evaluate who benefits from the recommendation. Every piece of vendor content has a call to action. Find it. Then ask whether the recommendation would still make sense if it came from someone who had nothing to sell. Cloudflare's advice to understand AI technology and identify business use cases is sound, but you could also find it in any analyst report from the last three years. The specific, differentiating insight Cloudflare could share, lessons from their own failures, is the part they left out.

- Check the correction timeline. Cloudflare's December 5 post-mortem stated that the fixes promised after November 18 had not been deployed. That is a 17-day gap between a public commitment and reality. When a vendor promises corrective action, check whether it actually happened. Public post-mortems are promises. Follow-through is the only metric that actually matters.
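If you want to make the first and last checks repeatable, a few lines of Python will do it. What follows is a minimal sketch, not a tool any vendor or auditor publishes. The `Incident` record, the `recurring_root_causes` and `days_between` helpers, and the root-cause labels are all my own hypothetical names and categories, assigned by reading the incidents described above. Only the two dates the article states exactly are filled in.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Incident:
    label: str
    root_cause: str                   # reviewer-assigned category, not vendor wording
    occurred: Optional[date] = None   # set only where an exact date is known


# Hypothetical log built from the outages described in this article.
INCIDENTS = [
    Incident("Bot Management feature file", "configuration change", date(2025, 11, 18)),
    Incident("WAF configuration change", "configuration change", date(2025, 12, 5)),
    Incident("1.1.1.1 resolver", "configuration change"),
    Incident("Dashboard API overload", "software bug"),
    Incident("R2 credential rotation", "wrong deployment target"),
]


def recurring_root_causes(incidents, threshold=2):
    """Return root-cause categories that appear at least `threshold` times."""
    counts = Counter(incident.root_cause for incident in incidents)
    return {cause: n for cause, n in counts.items() if n >= threshold}


def days_between(first, second):
    """Days from one dated incident to the next (both must have dates)."""
    return (second.occurred - first.occurred).days


if __name__ == "__main__":
    print(recurring_root_causes(INCIDENTS))
    print(days_between(INCIDENTS[0], INCIDENTS[1]))
```

The categories are a judgment call, and a different reviewer might bucket the incidents differently. The point is the habit: write the incidents down, label them yourself, and let the repeats show up on their own.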

The Question That Should Follow Every Vendor Keynote

The next time a vendor CSO stands on stage and tells you about the future of AI in cybersecurity, ask one question.

“What broke at your company in the last 12 months, and what did you change because of it?”

If they can’t answer that openly, they are not advising you. They are performing for you. And there is a meaningful difference between a vendor who has earned the right to advise you through demonstrated operational excellence and a vendor who writes about problems they haven’t yet solved internally.

Cloudflare's blog isn’t wrong about everything.

Are the threats real? Yes.

Will AI reshape security operations? Yes.

Do organizations need a long-term strategy? Absolutely.

But remember this: the messenger matters. And when the messenger is simultaneously selling you the solution, you owe it to your organization and your customers to verify before you trust, and before you write a check.

So, read the thought leadership and then read the incident reports. The distance between those two documents is the distance between what a vendor wants you to believe and what is actually true.

And that distance is where YOUR risk lives.
